Nicosia, Cyprus. The article says the main risk from AI systems is not that they act unpredictably, but that they execute existing permission models exactly as configured, including flawed ones. It argues that when AI connects to shared accounts or long-standing credentials, it can inherit years of accumulated access at machine speed.

AI described as executing existing authority structures

The article says AI does not, on its own, weaken governance, but instead carries out whatever authority structure already exists. It argues that AI systems are “very obedient” and apply existing permissions with speed and consistency beyond human capability, which can create significant business risk when those permissions were designed for human use.

Risks linked to undefined authority and inherited permissions

The article says the problem is not AI adoption in Cyprus, but authority that was never deliberately defined before AI arrived. It states that permissions designed for human workflows acquire a different risk profile when executed continuously and at scale by AI systems connected to existing identities.

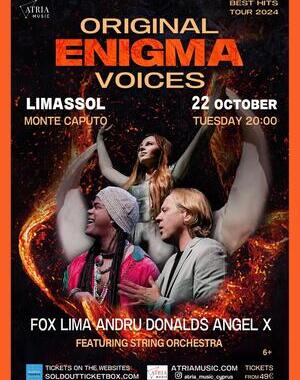

Examples involving Gemini and ChatGPT integrations

The article describes a law firm in Limassol that used Google’s Gemini to accelerate document review. Within 48 hours the system was summarising contracts and extracting clauses from prior agreements. It says the AI also searched seven years of client files, merger negotiations and internal strategy notes because it operated under the managing partner’s account. No one approved that scope for AI; it was already embedded in the account’s permissions.

It also describes a financial services firm in Nicosia that connected OpenAI’s ChatGPT to a shared OneDrive environment used for team collaboration. The article says that the account had accumulated access to KYC files and transaction reports over time and that ten employees used the same login. After the integration an eleventh user joined, which the article identifies as software rather than a human.
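The pattern in this example (one login, many people, sensitive data, then a software user) is the kind of thing a simple pre-integration review could surface. The following is a minimal sketch only: the `Account` record, the `review_flags` function and every name and value in it are hypothetical illustrations, not taken from the article and not the API of OneDrive or any other product.

```python
from dataclasses import dataclass, field


@dataclass
class Account:
    """Hypothetical record of who uses a login and what it can reach."""
    name: str
    human_users: set[str]
    resources: set[str]
    non_human_users: set[str] = field(default_factory=set)


def review_flags(account: Account, sensitive: set[str]) -> list[str]:
    """Return reasons this account should be reviewed before an AI tool uses it."""
    flags = []
    if len(account.human_users) > 1:
        flags.append(f"shared login ({len(account.human_users)} people)")
    if account.non_human_users:
        flags.append("non-human user on a human account")
    exposed = account.resources & sensitive
    if exposed:
        flags.append("sensitive access: " + ", ".join(sorted(exposed)))
    return flags


# Assumed data modelled loosely on the scenario: ten employees plus one integration.
SENSITIVE = {"kyc-files", "transaction-reports"}
team_drive = Account(
    name="shared-team-drive",
    human_users={f"employee-{i}" for i in range(1, 11)},
    resources={"team-notes", "kyc-files", "transaction-reports"},
    non_human_users={"ai-integration"},
)
flags = review_flags(team_drive, SENSITIVE)
```

In this sketch the shared account raises all three flags, which is the signal that its scope was accumulated rather than deliberately defined.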

“Authority inheritance at scale”

The article says nothing malfunctioned in these cases: the tools behaved as required and authorised. It describes the outcome as “authority inheritance at scale,” arguing that inherited authority is multiplied once AI systems are introduced, so that unchecked access can grow exponentially instead of simply persisting as before.

Cyprus market factors cited as increasing exposure

The article says Cyprus’s economic structure amplifies these risks, citing remotely onboarded clients at investment firms, cross-border transactions processed through cloud platforms by payment institutions, and core systems configured by outsourced IT providers. It adds that shared accounts and broad service credentials are common shortcuts in a small, fast-moving market that prioritises speed and trust, creating persistent structural exposure.

AI assistants and contextual appropriateness

The article presents a scenario in which a financial services firm deploys an AI assistant to respond to client portfolio questions using a senior relationship manager’s account, which provides access to full portfolio histories and KYC documentation. It says a human using that access applies professional judgment as a control layer, understanding relevance and confidentiality. It argues that an AI system operates differently: a cleverly worded prompt, even an unintentional one, may lead it to retrieve and integrate information across portfolios or summarise material prepared for regulatory submissions, because it does not understand that some technically accessible information may be contextually inappropriate.
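One way to picture the missing control layer is an explicit scope applied on top of whatever the account can technically reach. The sketch below is illustrative only and rests on assumptions: the `Document` type, the category labels, the allow-list and the `documents_for_assistant` function are all hypothetical, and the article does not prescribe this or any specific mechanism.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    """Hypothetical document record; categories are assumed labels."""
    client_id: str
    category: str  # e.g. "portfolio_history", "kyc", "regulatory_draft"
    title: str


# Assumed policy: the assistant may only see portfolio histories.
ASSISTANT_ALLOWED_CATEGORIES = frozenset({"portfolio_history"})


def documents_for_assistant(accessible: list[Document], asking_client_id: str) -> list[Document]:
    """Narrow what the account can reach to what fits this conversation.

    Keeps only documents that belong to the client asking the question
    and fall in a category explicitly allowed for the assistant.
    """
    return [
        doc
        for doc in accessible
        if doc.client_id == asking_client_id
        and doc.category in ASSISTANT_ALLOWED_CATEGORIES
    ]


# Everything below is reachable through the relationship manager's account.
docs = [
    Document("client-a", "portfolio_history", "A holdings 2023"),
    Document("client-a", "kyc", "A passport scan"),
    Document("client-b", "portfolio_history", "B holdings 2023"),
    Document("client-a", "regulatory_draft", "Draft regulatory submission"),
]
visible = documents_for_assistant(docs, "client-a")
```

Here only the first document passes the filter. The point of the sketch is that the scope is stated deliberately for the AI assistant instead of being inherited from the human account.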

What steps has your organisation taken to review shared accounts and inherited permissions before connecting AI tools?